Repeated quantum error detection in a surface code. Quantum measurements and gates by code deformation. Fault-tolerant quantum computation with high threshold in two dimensions. Fault-tolerant quantum computation by anyons. 37th Conference on Foundations of Computer Science 56 pp (IEEE, 1996). Quantum computing in the NISQ era and beyond. Our demonstration of repeated, fast and high-performance quantum error-correction cycles, together with recent advances in ion traps 10, support our understanding that fault-tolerant quantum computation will be practically realizable. The measured characteristics of our device agree well with a numerical model. We find a low logical error probability of 3% per cycle when rejecting experimental runs in which leakage is detected.

Repeatedly executing the cycle, we measure and decode both bit-flip and phase-flip error syndromes using a minimum-weight perfect-matching algorithm in an error-model-free approach and apply corrections in post-processing. In an error-correction cycle taking only 1.1 μs, we demonstrate the preservation of four cardinal states of the logical qubit. Using 17 physical qubits in a superconducting circuit, we encode quantum information in a distance-three logical qubit, building on recent distance-two error-detection experiments 7, 8, 9. Here we demonstrate quantum error correction using the surface code, which is known for its exceptionally high tolerance to errors 3, 4, 5, 6. For fault-tolerant operation, quantum computers must correct errors occurring owing to unavoidable decoherence and limited control accuracy 2. All rights reserved.Quantum computers hold the promise of solving computational problems that are intractable using conventional methods 1. An integrative definition and conceptual model, intended as a tentative and flexible point of departure for future research, adds needed breadth, specificity, and precision to efforts to conceptualize and measure UT.Īmbiguity Conceptual analysis Intolerance Narrative review Tolerance Uncertainty.Ĭopyright © 2017 The Authors. Uncertainty tolerance is an important and complex phenomenon requiring more precise and consistent definition. We discuss how this model can facilitate further empirical and theoretical research to better measure and understand the nature, determinants, and outcomes of UT in health care and other domains of life.

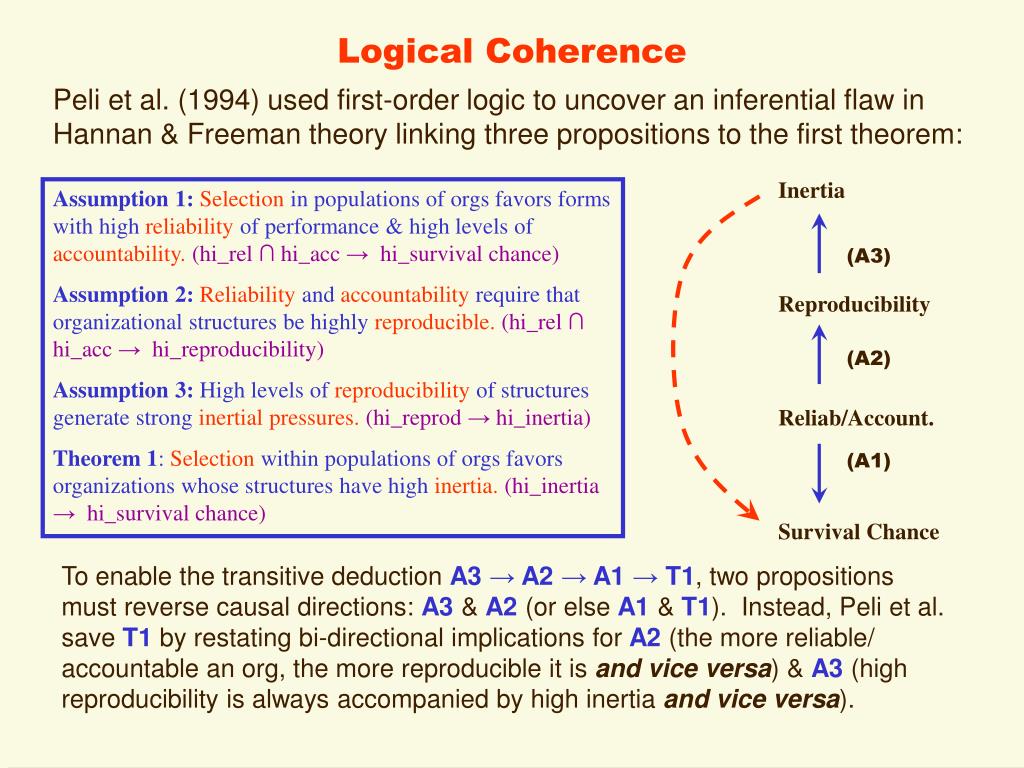

This model synthesizes insights from various disciplines and provides an organizing framework for future research. To address these gaps, we propose a new integrative definition and multidimensional conceptual model that construes UT as the set of negative and positive psychological responses-cognitive, emotional, and behavioral-provoked by the conscious awareness of ignorance about particular aspects of the world. A logically coherent, unified understanding or theoretical model of UT is lacking. The objectives of this study were to: 1) analyze the meaning and logical coherence of UT as conceptualized by developers of UT measures, and 2) develop an integrative conceptual model to guide future empirical research regarding the nature, causes, and effects of UT.Ī narrative review and conceptual analysis of 18 existing measures of Uncertainty and Ambiguity Tolerance was conducted, focusing on how measure developers in various fields have defined both the "uncertainty" and "tolerance" components of UT-both explicitly through their writings and implicitly through the items constituting their measures.īoth explicit and implicit conceptual definitions of uncertainty and tolerance vary substantially and are often poorly and inconsistently specified. Uncertainty tolerance (UT) is an important, well-studied phenomenon in health care and many other important domains of life, yet its conceptualization and measurement by researchers in various disciplines have varied substantially and its essential nature remains unclear.
